How I Built a PostgreSQL SSO Proxy from Scratch

A deep dive into how I built a PostgreSQL proxy in Go that connects OAuth/OIDC-based authentication to the Postgres wire protocol, with JWT validation, service-account mapping, TLS, connection pooling, and query auditing.

A company where developers and product managers are required to be given access to the production database to edit rows sounds like a compliance nightmare. It was, but that wasn’t the problem I was looking to solve. I wanted to solve the issue of how we used to provide this access to people: we gave them a password for a single database user that everyone used, including the microservices themselves, and that user had a permission set of GRANT ALL on the entire database.

You could argue that each user could have an individual database user created and be handed the password to that, which would solve the issue of audit logging (who did what on the database), but it was just a management nightmare for us as the infra and security team. Hence we were looking for JIT tools like strongDM or Teleport which would solve these issues, but the cost of acquiring such a tool at our scale was going to be at least 100k USD per annum, which was not something I was comfortable asking my CTO to spend.

That’s when the idea of building an RDS proxy integrated with SSO came into the picture. This would allow us to give access to the databases via corporate email addresses only, with detailed audit logging of the queries run on the database.

This blog goes into detail on implementing this proxy in Go from scratch and maybe helps you understand the fundamentals of PostgreSQL and how it works.

The basic features I was aiming to build for my proxy were:

- Connection pooling

- SSL/TLS support

- SSO auth with Azure AD via Auth0

- Auditing and observability

The first step in building a proxy is to expose it as a server on a particular port (7777) actively listening for client connections. Once a connection is made, it is passed on to a goroutine to be processed.

Once the connection is made, the proxy then has to establish a connection to the actual PostgreSQL database. To understand how this happens exactly, we need to understand the communication protocol used by PostgreSQL.

PostgreSQL wire protocol (Frontend/Backend Protocol)

PostgreSQL uses a message-based protocol for communication between frontends and backends (clients and servers). The protocol is supported universally on TCP/IP port 5432.

In order to serve multiple clients efficiently, the server launches a new “backend” process for each client. In the current implementation, a new child process is created immediately after an incoming connection is detected. This is transparent to the protocol, however. For purposes of the protocol, the terms “backend” and “server” are interchangeable; likewise “frontend” and “client” are interchangeable.

Every PostgreSQL message has this format:

[Message Type (1 byte)] [Length (4 bytes)] [Message Body (Length-4 bytes)]

- Message type: single character identifying the message

- Length: 32-bit int

- Message body: the actual data

At the proxy level, the messages are referred to like this:

- Frontend messages — sent by the client; the proxy intercepts these and sends them to the DB

- Backend messages — sent by the server; the proxy intercepts these from the DB and sends them to the user

In our implementation, we use pgproto3, which is the encoder and decoder of the PostgreSQL wire protocol version 3.

Startup message

The Startup message in the PostgreSQL wire protocol is the very first message sent when a PostgreSQL connection is established, and it is special enough that it has to be handled separately because:

- It has no message type.

- It’s always the first message in any PostgreSQL connection.

- It contains connection parameters such as username and database name.

Flow:

Client -> StartupMessage -> Proxy -> StartupMessage -> Database

Now that we have an understanding of the protocol, we can move ahead with the message flow. Once the connection is made to the proxy, a new pgproto3.Backend wrapping the raw TCP connection is created and the first call is pgconn.ReceiveStartupMessage().

The PostgreSQL startup message, as mentioned above, has no message type byte. Its format is [length:4 bytes][protocol_version:4 bytes][parameters]. pgproto3 handles this by reading the first 4 bytes, checking if they match the SSL request magic number (80877103), the cancel request magic (80877102), or a protocol version, and returning the appropriate concrete type.

There are three possible message types at this point, each handled by its own branch.

Case A: SSLRequest (*pgproto3.SSLRequest)

The client sends this request before the real startup message when it wants to establish TLS. The proxy must respond with a single byte before the client proceeds.

-

If TLS is not configured: the proxy sends the single byte

N(ASCII 78), indicating to the client that TLS is unavailable, and the client immediately sends the realStartupMessageon the same plaintext connection. -

If TLS is configured: the proxy sends

S(ASCII 83), indicating TLS is accepted, wraps the rawnet.Connin atls.Connusing the server’s certificate, and performs the TLS handshake.

Once done, pc.conn is replaced with the TLS connection and a new pgproto3.Backend connection is built over the TLS connection, and everything subsequent (password and queries) is encrypted.

Once one of the above is completed successfully, the StartupMessage is sent by the client to the proxy.

Case B: StartupMessage (*pgproto3.StartupMessage)

The StartupMessage contains user, database, application_name, client_encoding, and any other parameters the client sends.

Once the proxy receives this, it doesn’t send it to the PostgreSQL server. It instead sends back an AuthenticationCleartextPassword, just like how the PostgreSQL server would.

Case C: CancelRequest (*pgproto3.CancelRequest)

Cancel requests are entirely separate TCP connections, if enabled. A client receiving the SIGKILL or Ctrl+C opens a new connection to the proxy’s port and immediately sends a 16-byte cancel message without any SSL negotiation.

Structure:

Byte offset Size Value Meaning

----------------------------------------------------------

0 - 3 4 bytes 0x00000010 (16) Total message length

4 - 7 4 bytes 0x04D2162E Cancel magic number (80877102)

8 - 11 4 bytes <ProcessID> The backend PID to cancel

12 - 15 4 bytes <SecretKey> The secret key for that PID

Inside the proxy, this message must be assembled manually each time, like this:

buf := make([]byte, 16)

// Message length - 16 bytes

binary.BigEndian.PutUint32(buf[0:4], 16)

// Cancel request code - 80877102

binary.BigEndian.PutUint32(buf[4:8], 80877102)

// Process ID

binary.BigEndian.PutUint32(buf[8:12], cancel.ProcessID)

// Secret key

binary.BigEndian.PutUint32(buf[12:16], cancel.SecretKey)

ProcessID is the identification assigned to the process forked for handling the new connection made.

SecretKey is generated by PostgreSQL within the backend process when a new client connection is established. When PostgreSQL forks a dedicated backend process to handle the connection, it creates a random 32-bit integer and associates it with that process’s PID.

The SecretKey exists only for the purpose of query cancellation.

PostgreSQL then sends both the PID and the SecretKey to the connected client in a BackendKeyData message:

┌──────────────┬──────────────┬──────────────┬──────────────┐

│ 'K' (1 byte) │ Length │ ProcessID │ SecretKey │

│ type byte │ (4 bytes) │ (4 bytes) │ (4 bytes) │

└──────────────┴──────────────┴──────────────┴──────────────┘

Based on the ProcessID and SecretKey, the proxy must now identify the active connection and cancel it.

In my proxy, we store the activeConnections in a map keyed by uint64(ProcessID << 32 | SecretKey) — a bitfield combining both values into a single efficient map key.

If the connection is found to be active, the cancel request flow is called, which initiates a new TCP connection to the database, not from the connection pool. The raw 16-byte binary cancel message is sent and the connection is closed immediately.

PostgreSQL receives this, validates the PID/SecretKey against its own backend process table, and sends SIGINT to the matching backend process which aborts the in-flight query and returns an ErrorResponse with code 57014 to the client via the existing pooled connection.

Authentication inside the proxy

Now that the StartupMessage has been sent successfully, it’s time for the user authentication part of the proxy. I split it into 3 sequential phases:

- Open a temporary backend connection - A raw TCP connection to PostgreSQL, not from the pool, is made with the sole purpose of authentication.

-

Request a password from the client - The proxy sends

AuthenticationCleartextPasswordto the client. From the client’s perspective, the proxy is behaving like a PostgreSQL server requesting a password. The client sends back aPasswordMessagecontaining whatever was inPGPASSWORDor whatever was entered interactively.PostgreSQL generally uses SCRAM-SHA-256 or MD5, but the proxy here always asks the client for cleartext.

- Determine the authentication flow - The reason for getting the password as cleartext is for the proxy to inspect it and decide whether the password sent is a JWT token or a normal password.

JWT detection: check for the eyJ prefix (base64url encoding of {") and exactly 2 dots (the three-part JWT structure header.payload.signature). Simple heuristic, but correct for all JWTs.

Traditional password flow

The username the client sent is used along with the password. It’s basically a fallback for when someone configures a real PostgreSQL user in the proxy and connects with a traditional password.

Inside JWT validation

jwt.Parseis called with a key function. The key function:- Checks

t.Method.Alg()=="RS256"— rejects anything else - Extracts

kid(Key ID) from the token header - Calls

v.getPublicKey(kid)

- Checks

getPublicKey(kid)— this is where JWKS caching happens:- Acquires

RLock, checks ifkidexists inv.publicKeysandtime.Since(v.lastKeysFetch) < 1 hour - Cache hit: releases RLock, returns the key — no network call

- Cache miss: releases RLock, acquires full

Lock(write), double-checks again (another goroutine may have fetched while waiting), then callsfetchJWKS() fetchJWKS()makes a GET tohttps://<AUTH0_TENANT>/.well-known/jwks.jsonwith a 10-second timeout, filters forkty=RSA, use=sig, decodes base64url modulusNand exponentE, constructs*rsa.PublicKeyobjects, stores them all inv.publicKeyskeyed bykid, updatesv.lastKeysFetch- Fetch failure with stale keys: if the JWKS endpoint is down but old keys exist, logs a warning and returns the stale key — this is the graceful degradation path

- Fetch failure, no keys: returns error

- Acquires

-

jwt.Parseverifies the RS256 signature using the public key returned from the key function. If the signature is invalid, it returns an error. - Manual claim validation (after signature passes):

issclaim == configured issuer — exact string matchaudclaim == configured audience — handles bothstringand[]interface{}types (Auth0 can send either)emailclaim — must be present and non-emptysubclaim — must be present and non-emptyexpclaim —time.Now().After(oauthContext.ExpiresAt)— double-check (jwt.Parse also checks this but the manual check is explicit)

- Role extraction from

extractRoles(): triesclaims["role"]first, thenclaims["roles"]— handles both singular and plural claim names. Each can be astringor[]interface{}.

This validation process returns the email, role, and expiry time for the token, which is then used to map the role to a service account configured in the proxy. If no role matches, it ends up using the default role, which has read-only access.

Service accounts are basically users configured in the PostgreSQL database that the proxy uses to connect to the database, since the SSO-returned user does not actually exist inside PostgreSQL.

This also ensures we don’t have to create a PostgreSQL user for every user logging into the database, and the same goes for deletion as well. If a user is removed from Active Directory, they automatically do not have access to the database anymore.

Authentication with PostgreSQL

Now that the proxy has authenticated and authorised the incoming SSO user, it now needs to connect this user/client to the actual PostgreSQL database (backend).

This is done in the same way by sending a StartupMessage to PostgreSQL via a temporary connection, with one small change: the user field is replaced with the service account username before being sent to PostgreSQL. PostgreSQL never sees the original user@email.com that the client sent.

The proxy now enters a loop reading messages from PostgreSQL. This part is referred to as SASL authentication in the PostgreSQL protocol.

-

To begin a SASL authentication exchange, the PostgreSQL server sends an

AuthenticationSASLmessage. It includes a list of SASL authentication mechanisms that the server can accept, in the server’s preferred order.The default for this is usually either SCRAM-SHA-256 or MD5, rarely cleartext.

-

The proxy selects the first one in the priority of the supported mechanisms from the list, and sends a

SASLInitialResponsemessage to the server.If

AuthenticationSASLsends SCRAM-SHA-256, the proxy instantiates anxdg-go/scramSHA-256 client acting on behalf of the service account. The library is used to perform the full cryptographic exchange using the service account’s password. PostgreSQL never sees the JWT — it only sees the service account performing standard SCRAM.SCRAM-SHA-256 is a 3-round challenge-response protocol. The proxy first sends the

SASLInitialResponseand, with the help of thescramlibrary, starts the conversation by callingStep("")with an empty string — this means “generate the client-first message” (the opening move of SCRAM).

The above in code looks something like this:

client, err := scram.SHA256.NewClient(username, password, "")

if err != nil {

return logger.Errorf("failed to create SCRAM client: %w", err)

}

scramConversation = client.NewConversation()

initialResponse, err := scramConversation.Step("")

if err != nil {

return logger.Errorf("SCRAM initial step failed: %w", err)

}

logger.Debug("sending SCRAM initial response to backend")

err = frontend.Send(&pgproto3.SASLInitialResponse{

AuthMechanism: "SCRAM-SHA-256",

Data: []byte(initialResponse),

})

if err != nil {

return logger.Errorf("failed to send SASL initial response: %w", err)

}

The final payload is a structured ASCII string. It looks like:

n,,n=gprxy_admin,r=fyko+d2lbbFgONRv9qkxdawL

Breaking this down character by character:

| Part | Value | Meaning |

|---|---|---|

| n,, | n,, | GS2 header. n = no channel binding. ,, = no authzid |

| n= | n=gprxy_admin | The username (the n= attribute) |

, |

, |

Separator |

| r= | r=fyko+d2lbbFgONRv9qkxdawL | Client nonce |

authzid (Authorization Identity) is the SASL mechanism component defining the user identity that a client wants to act as.

Client nonce — a cryptographically random base64 string generated fresh for this authentication.

- PostgreSQL responds with the

AuthenticationSASLContinueserver-first message, a challenge. This is the message that makes SCRAM secure.

The payload contains r=<combined_nonce>,s=<salt>,i=<iterations>

Combined nonce — client nonce + server nonce appended together. PostgreSQL echoes back the client nonce and appends its own random suffix. The client must verify the prefix matches what it sent.

Salt — a random base64-encoded value stored in pg_authid alongside the user’s password hash. Different for every user.

Iteration count — how many times to apply PBKDF2 to derive the key. Higher = more expensive to brute-force. PostgreSQL defaults to 4096.

- The proxy responds with the client-final message, containing the client proof as

SASLResponse.

To send the response, the proxy first needs to do the cryptographic modifications to the request, for which it calls scramConversation.Step(serverFirstMessage).

The following cryptographic computations are done:

SaltedPassword = PBKDF2(SHA-256, password, salt, iterations, 32)ClientKey = HMAC-SHA-256(SaltedPassword, "Client Key")StoredKey = SHA-256(ClientKey)AuthMessage = client-first-message-bare + "," + server-first-message + "," + client-final-message-without-proofClientSignature = HMAC-SHA-256(StoredKey, AuthMessage)ClientProof = ClientKey XOR ClientSignature

The password never travels on the wire. Only ClientProof does — a value that proves you know the password without revealing it.

The final payload that is sent to the server looks like this:

c=biws,r=fyko+d2lbbFgONRv9qkxdawL3rfcNHYJY1ZVvWVs7j,p=dHzbZapWIk4jUhN+Ute9ytag9zjfMHgsqmmiz9AndVQ=

| Attribute | Example value | Meaning |

|---|---|---|

| c= | biws | Channel binding data biws is the base64 of "n,," (the GS2 header from the initial message). Since gprxy uses no channel binding, this is always biws. |

| r= | fyko+d2lbbFgONRv9qkxdawL3rfcNHYJY1ZVvWVs7j | The full combined nonce echoed back exactly as received from the server. |

| p= | dHzbZapWIk4jUhN+Ute9ytag9zjfMHgsqmmiz9AndVQ= | The ClientProof — the XOR of ClientKey and ClientSignature, base64-encoded. This is the proof of knowledge. |

-

PostgreSQL receives the above payload and does the below computations before sending the final server message

AuthenticationSASLFinal. - Verifies

r=still starts with the client nonce it saw earlier. - Computes the same

AuthMessageon its side using the stored password hash. - Computes

StoredKeyfrompg_authid. - Verifies the proof:

SHA-256(ClientKey)must equalStoredKey, which it can check without knowingClientKeydirectly.

This is the mutual authentication step: PostgreSQL proves to the proxy that it also knows the password. This prevents man-in-the-middle attacks.

The final payload that is sent to the client looks like this:

v=<ServerSignature>

- The proxy calls

scramConversation.Step(serverFinalMessage):

This internally computes the expected ServerSignature using its copy of SaltedPassword and the AuthMessage, then compares it to v= from the server. If they don’t match, it means it is connected to a rogue server.

-

Along with

AuthenticationSASLFinal, the server also sends anAuthenticationOkmessage. The same is passed along to the client, which makes it believe that the authentication is successful. -

PostgreSQL also sends several of these immediately after

AuthenticationOk:

server_version = 16.1

client_encoding = UTF8

server_encoding = UTF8

DateStyle = ISO, MDY

TimeZone = UTC

integer_datetimes = on

...

Each is forwarded to the client unchanged. The client caches these for the session.

BackendKeyDataandReadyForQueryare sent to the proxy by PostgreSQL, but these are never relayed to the client and the temporary connection is then terminated.

The reason behind this is that this whole authentication process was performed by a temporary connection that was terminated. Relaying that connection’s BackendKeyData, which is mainly used in cancelling requests by extracting the (PID, SecretKey), would result in either of these 3 scenarios:

-

Temp connection PID is already dead - The temp connection is closed immediately after auth. Its backend PostgreSQL process (

PID=12345) is gone. The client pressesCtrl+C. The proxy receives(PID=12345, SK=98765). It looks inactiveConnections— nothing is registered there with that pair. The cancel is silently dropped. The query keeps running forever. -

Temp connection PID is registered but wrong - Even if the proxy tried to register the temp connection’s key, what would it point to? The temp connection has no pool connection attached to it. There is no backend to cancel on. The proxy would forward the cancel to PostgreSQL targeting a process that is either dead or belongs to a completely different connection.

-

OS PID reuse - PIDs are finite. The OS can recycle

PID=12345to a completely different PostgreSQL backend process after the temp connection closes. The client’s cancel request, carrying that stale PID, could accidentally cancel a totally unrelated query running on a different client’s connection.

The only correct PID and SecretKey to give the client is the one belonging to the pool connection — the live backend process that is actually executing queries for this client.

Similarly, the ReadyForQuery message is also suppressed and not relayed to the client, as there is no pooled backend connection ready yet for it to start relaying queries.

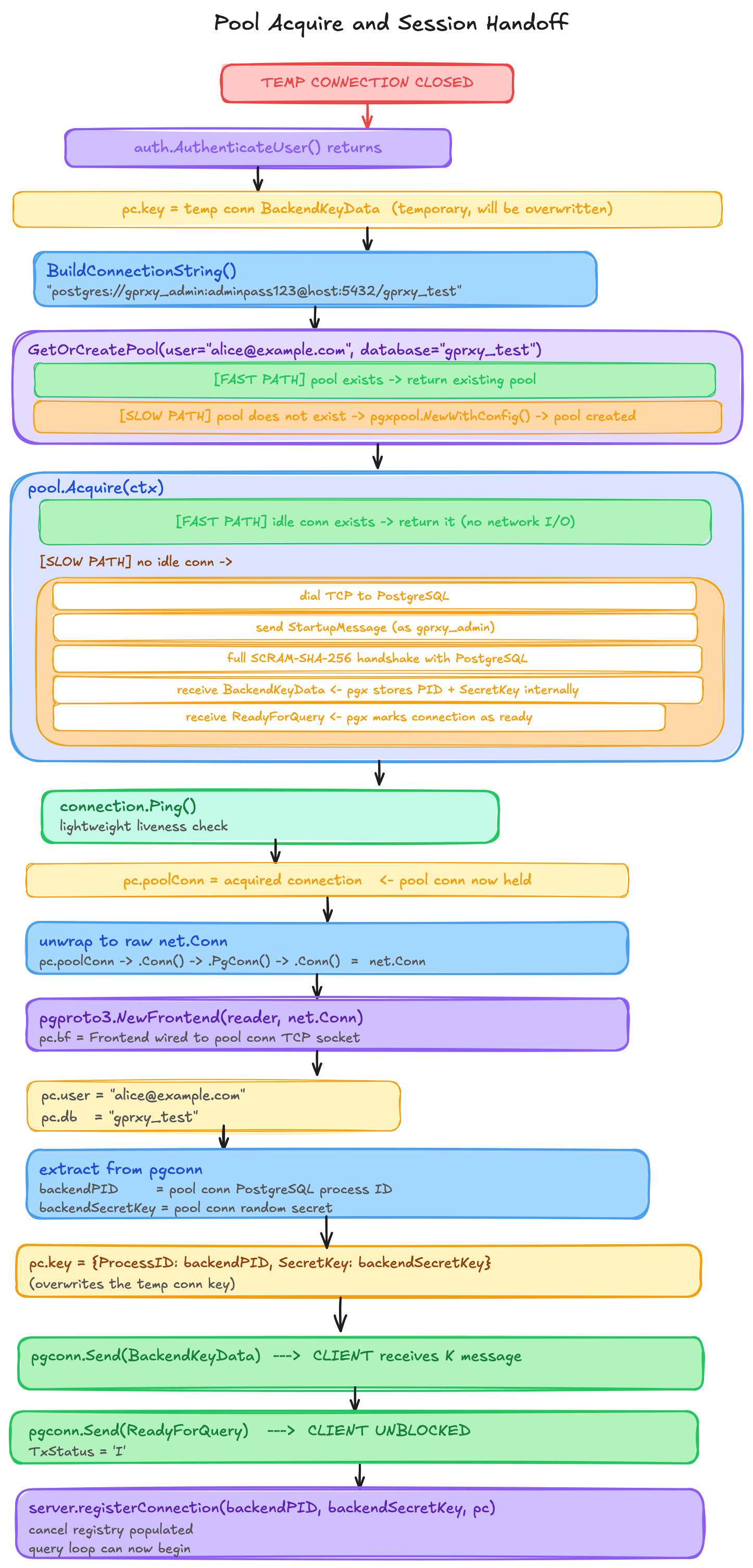

Post-Authentication Sequence

Now that the user is authenticated successfully with PostgreSQL, the proxy needs a connection from the pool to start running queries.

Let’s now talk about how connection pooling is implemented:

Layer 1: The Top-Level Registry poolManager

var (

poolManager = make(map[poolKey]*pgxpool.Pool)

poolMutex sync.RWMutex

)

This is a global process-wide map — one instance for the entire gprxy process, shared across all goroutines and all client connections. It lives for the lifetime of the process and is never torn down.

The Key: poolKey

type poolKey struct {

user string

database string

}

This is a Go struct used as a map key. Go allows any comparable type as a map key, and structs with only comparable fields are comparable. The two fields together form the composite key.

user here is the original client username — e.g. alice@example.com from the JWT, or bob from traditional auth. It is NOT the service account (gprxy_admin). This is set from msg.Parameters["user"] from the original StartupMessage.

database is the database name from the same StartupMessage — e.g. gprxy_test.

So the map looks like this after several clients connect:

poolManager = {

{user: "alice@example.com", database: "gprxy_test"} → *pgxpool.Pool (up to 5 conns)

{user: "alice@example.com", database: "analytics"} → *pgxpool.Pool (up to 5 conns)

{user: "bob@example.com", database: "gprxy_test"} → *pgxpool.Pool (up to 5 conns)

{user: "carol", database: "gprxy_test"} → *pgxpool.Pool (up to 5 conns)

}

Each *pgxpool.Pool value manages its own set of up to 5 real TCP connections to PostgreSQL.

The Lock: sync.RWMutex

poolMutex protects poolManager from concurrent reads and writes across goroutines. Since every client connection runs in its own goroutine, many goroutines can call GetOrCreatePool simultaneously.

A sync.RWMutex allows:

- Many goroutines to read simultaneously —

RLock()→RUnlock() - Only one goroutine to write, blocking all reads —

Lock()→Unlock()

The read path (pool already exists) is the fast path — it only holds a read lock for a microsecond. The write path (first connection for this key) is rare and takes a write lock briefly to insert the new pool.

Layer 2: The Double-Checked Locking Pattern

poolMutex.RLock()

pool, exists := poolManager[key]

poolMutex.RUnlock()

if exists {

return pool, nil

}

poolMutex.Lock()

defer poolMutex.Unlock()

if pool, exists := poolManager[key]; exists {

return pool, nil

}

This is a classic double-checked locking pattern. Here is why it needs two checks:

Scenario without the second check:

- Goroutine A:

RLock()→ pool not found →RUnlock() - Goroutine B:

RLock()→ pool not found →RUnlock() - Goroutine A:

Lock()→ creates pool →Unlock() - Goroutine B:

Lock()→ also creates a second pool → two pools for same key, one is lost

The second check inside the write lock prevents this. When goroutine B gets the write lock after A finishes, it checks again and finds the pool already there, so it returns it instead of creating a duplicate.

Layer 3: What pgxpool.Pool Actually Is

Each value in poolManager is a *pgxpool.Pool. This is not a simple slice of connections. It is a sophisticated object with its own goroutines and internal data structures.

Internal structure (inside pgxpool):

*pgxpool.Pool

├── config *pgxpool.Config (MaxConns, timeouts, etc.)

├── p *puddle.Pool[*pgxpool.connResource] ← the actual pool

│ ├── resources []poolResource (ring buffer of connections)

│ ├── cond *sync.Cond (for blocking Acquire calls)

│ └── ...

├── closeChan chan struct{} (signal pool close)

└── ...

pgxpool uses the puddle library internally for the actual pooling logic. puddle maintains a list of resources (connections) and a sync.Cond for goroutines waiting for an available connection.

The pool configuration gprxy sets:

config.MaxConns = defaultMaxConns // 5

config.MinConns = defaultMinConns // 0

config.MaxConnLifetime = defaultMaxConnLifetime // 1 hour

config.MaxConnIdleTime = defaultMaxConnIdleTime // 30 minutes

config.HealthCheckPeriod = defaultHealthCheckPeriod // 1 minute

config.ConnConfig.ConnectTimeout = defaultConnectTimeout // 5 seconds

What each setting actually controls:

MaxConns = 5

The hard ceiling. At most 5 TCP connections to PostgreSQL will ever exist for this (user, database) key. When all 5 are acquired (in use), the 6th pool.Acquire() call blocks — the calling goroutine is parked and put on a wait queue inside puddle. It will be woken up when one of the 5 connections is released.

MinConns = 0

No pre-warming. When the pool is created, zero connections to PostgreSQL are opened. The first pool.Acquire() on a fresh pool will always open a new TCP connection. This is lazy initialization — no connections consumed for idle users.

MaxConnLifetime = 1 hour

A background goroutine inside pgxpool periodically checks the age of every connection. Any connection older than 1 hour is closed and removed from the pool, even if it is idle and healthy. This forces periodic reconnection, which is important for:

- Picking up PostgreSQL configuration changes

- Rotating credentials if needed

- Preventing connections from being silently dropped by firewalls or load balancers that kill long-lived idle connections

MaxConnIdleTime = 30 minutes

Any connection that has been idle (not acquired by anyone) for 30 minutes is closed. This prevents the pool from holding open connections during quiet periods.

HealthCheckPeriod = 1 minute

Every minute, a background goroutine runs through all idle connections and pings each one. Any connection that fails the ping (PostgreSQL restarted, network blip) is removed from the pool. This keeps the pool clean so that Acquire() always returns a working connection.

ConnectTimeout = 5 seconds

When a new TCP connection to PostgreSQL needs to be opened, it must complete the entire startup handshake (TCP connect + SCRAM auth + ReadyForQuery) within 5 seconds. If it takes longer, the connection attempt is aborted and an error is returned.

Layer 4: *pgxpool.Conn as a Single Exclusive Handle

When pool.Acquire(ctx) returns, it gives back a *pgxpool.Conn. This is not a connection itself — it is a handle that:

- Wraps the underlying

*pgx.Conn - Marks that connection as acquired (in use) in the pool’s internal state

- Provides a

Release()method to return it

*pgxpool.Conn

├── p *pgxpool.Pool (pointer back to parent pool)

└── res *puddle.Resource (the resource being held)

└── value *pgxpool.connResource

└── conn *pgx.Conn (the actual connection)

The connection is exclusively held — the pool will not give the same underlying *pgx.Conn to any other goroutine while one *pgxpool.Conn holds it. This is what makes it safe for pc.bf.Send() and pc.bf.Receive() to call directly into the TCP socket without any additional locking. The pool’s ownership model guarantees single-writer, single-reader.

How gprxy digs through the layers to get the raw socket

underlyingConn := pc.poolConn.Conn().PgConn().Conn()

bf := pgproto3.NewFrontend(pgproto3.NewChunkReader(underlyingConn), underlyingConn)

The chain of unwrapping:

pc.poolConn *pgxpool.Conn

.Conn() *pgx.Conn (higher-level pgx connection)

.PgConn() *pgconn.PgConn (low-level wire protocol connection)

.Conn() net.Conn (raw TCP socket)

gprxy bypasses all of pgx’s query execution machinery and talks directly to the raw TCP socket. It wraps it with a pgproto3.Frontend to get PostgreSQL wire protocol serialization/deserialization. This is why gprxy can forward arbitrary protocol messages — because it is working at the wire level, not through pgx’s Query()/Exec() API.

However, this creates a subtle tension: pgx still thinks it “owns” this connection and its internal state machine. gprxy is now sending bytes on the socket that pgx does not know about. This is why the fullResetBeforeRelease step is critical — pgx’s internal state may be out of sync with the actual PostgreSQL session state after gprxy forwards arbitrary queries, and ROLLBACK + DISCARD ALL restores the PostgreSQL session to a clean state before pgx takes back ownership.

Layer 5: Connection Lifecycle State Machine

For a single physical PostgreSQL connection managed by pgxpool, the happy-path lifecycle looks like this:

Important nuance: after pgxpool.NewWithConfig(), the pool object exists immediately, but with MinConns=0 there may be zero physical PostgreSQL connections inside it until the first Acquire() needs one. Also, Release() does not always transition to [IDLE] — if the connection is broken, expired, or otherwise not reusable, pgxpool closes it instead of returning it to the free list.

Layer 6: The Acquire Flow

connection, err := pool.Acquire(context.Background())

if err != nil {

return nil, logger.Errorf("error while acquiring connection from the database pool: %w", err)

}

err = connection.Ping(context.Background())

if err != nil {

connection.Release()

return nil, logger.Errorf("could not ping database: %w", err)

}

return connection, nil

pool.Acquire(ctx) internally does:

- Lock puddle’s internal mutex

- Check the free list (idle connections):

- If found: remove from free list, mark as acquired, return it

- If free list empty, check total count vs

MaxConns:- If below

MaxConns: unlock, open a new connection (dial + SCRAM auth), lock again, add to acquired set, return - If at

MaxConns: add this goroutine to a wait queue (sync.Cond.Wait()), unlock, sleep - When another goroutine calls

Release(): it callsCond.Signal(), waking one waiter, which retries the acquire

- If below

After Acquire() returns, gprxy calls Ping(). This is an extra safety net on top of the health check background goroutine. Between the health check goroutine’s last check (up to 1 minute ago) and right now, the connection could have gone stale. Ping() sends a minimal no-op to PostgreSQL and waits for a response.

If Ping() fails, Release() is called immediately — but gprxy does not put a broken connection back. pgxpool’s Release() is smart: if the underlying connection returns an error, it destroys the connection instead of returning it to the free list. So after connection.Release() on a failed ping, the pool size decreases by 1, and the next Acquire() will open a fresh connection.

Layer 7: The LogPoolStats Observation Window

After every successful AcquireConnection, gprxy calls:

pool.LogPoolStats(user, database)

func LogPoolStats(user, database string) {

stats := pool.Stat()

logger.Debug("pool stats for [%s,%s] - total: %d, acquired: %d, idle: %d", user, database, stats.TotalConns(), stats.AcquiredConns(), stats.IdleConns())

}

pool.Stat() returns a snapshot of:

TotalConns()— total live connections (acquired + idle), max is 5AcquiredConns()— currently held by goroutines (including this one just acquired)IdleConns()— back in free list, available immediately

TotalConns = AcquiredConns + IdleConns always. For example:

pool stats for [alice@example.com,gprxy_test] - total: 3, acquired: 2, idle: 1

This means 3 real TCP connections exist to PostgreSQL, 2 are in use by active client connections, and 1 is sitting idle waiting to be acquired.

Layer 8: Release and Reset

When a client disconnects, the defer block in handleConnection runs:

if pc.poolConn != nil {

err := fullResetBeforeRelease(pc)

if err != nil {

logger.Error("error while releasing connection back to the pool: %v", err)

}

pc.poolConn.Release()

}

fullResetBeforeRelease runs two SQL commands through pgx’s normal execution path (not through pc.bf), because at this point gprxy is done forwarding arbitrary messages:

func fullResetBeforeRelease(connection *Connection) error {

_, err := connection.poolConn.Exec(context.Background(), "ROLLBACK")

// ...

_, err = connection.poolConn.Exec(context.Background(), "DISCARD ALL")

// ...

return nil

}

ROLLBACK rolls back any open transaction. Without this, if a client died mid-BEGIN, the pool connection would return to the free list still inside a transaction. Any rows it had locked would remain locked. The next client to get this connection would start their first query inside someone else’s transaction.

DISCARD ALL is a PostgreSQL supercommand that resets everything about the session in one round trip:

- All

SETvariables back to defaults - All named prepared statements deallocated

- All open cursors closed

- All

LISTENsubscriptions removed - All advisory locks released

- All cached query plans discarded

After these two commands, the pool connection’s PostgreSQL session is byte-for-byte identical to a fresh connection. pgx’s internal state may still be slightly stale (it didn’t observe gprxy’s arbitrary wire-level queries), but the actual PostgreSQL session is clean.

Then poolConn.Release() is called. Inside pgxpool, this:

- Locks puddle’s mutex

- Moves the resource from the acquired set back to the idle free list

- Calls

Cond.Signal()to wake any goroutine blocking onAcquire() - Unlocks

The connection is now available for the next client that calls AcquireConnection.

Data Structure Map

PROCESS GLOBAL STATE

─────────────────────────────────────────────────────────────────────

poolManager: map[poolKey]*pgxpool.Pool

│

├── key: {user:"alice@example.com", database:"gprxy_test"}

│ └── *pgxpool.Pool

│ ├── MaxConns: 5

│ ├── free list (idle):

│ │ └── *pgx.Conn [TCP socket to pg:5432, PID=1001, SK=11111]

│ ├── acquired set (in use):

│ │ ├── *pgx.Conn [TCP socket to pg:5432, PID=1002, SK=22222] ← held by alice session 1

│ │ └── *pgx.Conn [TCP socket to pg:5432, PID=1003, SK=33333] ← held by alice session 2

│ └── background goroutines:

│ ├── health checker (every 1 minute)

│ └── idle reaper (checks MaxConnIdleTime/MaxConnLifetime)

│

├── key: {user:"bob@example.com", database:"gprxy_test"}

│ └── *pgxpool.Pool

│ ├── MaxConns: 5

│ ├── free list (idle): [empty]

│ └── acquired set (in use):

│ └── *pgx.Conn [TCP socket to pg:5432, PID=1004, SK=44444] ← held by bob session 1

│

└── poolMutex: sync.RWMutex (guards the map above)

PER-CLIENT-CONNECTION STATE (one per active client goroutine)

─────────────────────────────────────────────────────────────────────

*Connection (alice session 1)

├── conn: net.Conn → TCP socket to alice's psql process

├── poolConn: *pgxpool.Conn → exclusively holds PID=1002 conn above

├── bf: *pgproto3.Frontend → wired to PID=1002's raw TCP socket

├── user: "alice@example.com"

├── db: "gprxy_test"

└── key: BackendKeyData{ProcessID:1002, SecretKey:22222}

↑ also registered in server.activeConnections

Key Design Properties and Tradeoffs

1. Per-(client-user, database) pools, not a single global pool

Each unique (user, database) pair gets its own pool. This means Alice and Bob each have their own separate bucket of connections. This has pros and cons:

- Pro: isolation — Alice exhausting her 5 connections doesn’t block Bob

- Con: the worst case total connections to PostgreSQL =

(number of distinct users) × (number of distinct databases) × 5. With 100 users each connecting to 2 databases that’s 1000 PostgreSQL backend processes — potentially catastrophic at scale.

A shared global pool per database would be more scalable, but then all users compete for the same connections.

2. The pool key uses client identity, not service account

All connections in a pool connect to PostgreSQL as gprxy_admin (from BuildConnectionString), but the pool is keyed on the client user (alice@example.com). This means two different clients who both map to gprxy_admin still have completely separate pools. This provides isolation but wastes connections — both pools independently open connections as the same PostgreSQL user.

3. MinConns=0 means cold start latency

The first connection for any (user, database) pair always pays the full TCP dial + SCRAM handshake cost on the hot path (while the client is waiting). Subsequent connections are fast (free list lookup). If MinConns were 1, the pool would pre-open a connection at creation time, eliminating this latency at the cost of always holding an open connection even for inactive users.

4. The pool is never closed

There is no code path in gprxy that calls pool.Close(). Once a pool exists in poolManager, it lives forever until the process exits. The idle reaper and MaxConnIdleTime handle draining unused connections from within each pool, but the pool object and its entry in poolManager persist. This is a minor memory leak for transient users — if 10,000 different users each connect once, poolManager will have 10,000 entries (each being a small pool object with zero connections) forever.

Now that we have a detailed understanding of connection pooling, let’s get back to the authenticated user who now makes a request for a connection from the pool:

Case A — Pool for this (user, database) key already exists: Read lock acquired, pool found, read lock released. No write lock, no allocation, extremely fast. The existing *pgxpool.Pool object is returned.

Case B — Pool does not exist yet (first connection for this user+database combination):

Write lock acquired. The pool configuration struct is built and pgxpool.NewWithConfig is called. With MinConns=0, the pool does not open any connections to PostgreSQL at creation time. It creates the management infrastructure (the pool object, its background goroutines for health checking, etc.) but no actual TCP connections to PostgreSQL yet. The pool is stored in the global poolManager map.

Then comes the part of making an actual connection to the PostgreSQL database. The pool checks its internal free list:

- If an idle connection exists: it returns it immediately and marks it as in-use.

- If no idle connection exists but the pool is below

MaxConns(5): it opens a new TCP connection to PostgreSQL, performs the full PostgreSQL startup handshake (this includes SCRAM-SHA-256 authentication using the DSN credentials), and returns the resulting connection. - If the pool is at

MaxConnswith none idle: it blocks until one is released by another goroutine.

When a new connection is opened, the full PostgreSQL wire protocol handshake happens inside pgx transparently — StartupMessage, SCRAM exchange, AuthenticationOk, ParameterStatus, BackendKeyData, ReadyForQuery. pgx handles all of this internally and stores the resulting PID and SecretKey on the connection object. This is where the pool connection’s BackendKeyData originates.

After acquire, a ping is sent — a cheap SELECT 1 equivalent at the protocol level. If the connection was idle and the PostgreSQL server closed it server-side (e.g. idle_in_transaction_session_timeout), the ping will fail, the connection is discarded, and AcquireConnection returns an error. This prevents handing the client a dead connection.

After this call returns, pc.poolConn is a live, valid, exclusively-held *pgxpool.Conn.

What the client is doing during all of this: still blocked. It sent StartupMessage, received AuthenticationOk + ParameterStatus messages, and is waiting for BackendKeyData + ReadyForQuery. It has no idea any of this infrastructure work is happening.

pgproto3.NewChunkReader(underlyingConn) wraps the TCP socket in a buffered reader that knows how to read PostgreSQL wire protocol message boundaries. pgproto3.NewFrontend(reader, underlyingConn) creates a Frontend — an object that speaks PostgreSQL from the client side (i.e. it sends queries and reads responses, opposite of Backend which speaks from the server side).

This *pgproto3.Frontend is what pc.bf is. Going forward, every call to pc.bf.Send(msg) writes PostgreSQL wire bytes directly onto the pool connection’s TCP socket to PostgreSQL. Every call to pc.bf.Receive() reads PostgreSQL response bytes off that same socket.

pc.user and pc.db are stored for logging purposes throughout the query loop.

pgconn.PID() and pgconn.SecretKey() return values that pgx stored internally when it completed the pool connection’s startup handshake. These are the PID and SecretKey of the live PostgreSQL backend process that is holding this pool connection on the other end.

pc.key is now overwritten — the dead temp connection’s key is replaced with the live pool connection’s key. This is the correct key that must be given to the client and registered in the cancel registry.

Send BackendKeyData to the client

pgconn here is the *pgproto3.Backend connected to the client — the server-side view of the client connection. pgconn.Send(pc.key) serializes the BackendKeyData struct into the PostgreSQL wire format and writes it to the client’s TCP socket:

K (1 byte - message type 'K')

00 00 00 0C (4 bytes - length = 12)

XX XX XX XX (4 bytes - ProcessID)

YY YY YY YY (4 bytes - SecretKey)

The client receives this, parses it, and stores (ProcessID, SecretKey) internally. Its driver will use this if the user or a timeout triggers a query cancellation.

Send ReadyForQuery to the client

TxStatus: 'I' means “idle, not in a transaction”. This is always correct here because the pool connection was just acquired and either just opened or just had DISCARD ALL run on it — it is guaranteed to be in idle state.

Wire format:

Z (1 byte - message type 'Z')

00 00 00 05 (4 bytes - length = 5)

49 (1 byte - 'I' = idle, or 'T' = in transaction, 'E' = error state)

This message unblocks the client. The client’s driver, which has been sitting in ReadyForQuery wait since it sent the StartupMessage, now receives this and considers the connection fully established. It returns the connection object to the application. The application can now call conn.Query(...) or conn.Exec(...).

This ReadyForQuery is synthesized by gprxy itself — it is not forwarded from PostgreSQL. gprxy is lying to the client in the best possible way: the client believes it just completed startup with a PostgreSQL server, but the ReadyForQuery came from the proxy, which only sent it after confirming the pool connection is live and pc.bf is wired up and ready.

Register in the cancel registry

registerConnection stores the *Connection pointer in server.activeConnections keyed by the bit-packed uint64 of (ProcessID << 32 | SecretKey). This happens after ReadyForQuery is sent because the cancel registry only needs to be populated before a query actually runs — and no query can run until the client receives ReadyForQuery and sends the next message. There is no race condition here because both happen in the same goroutine sequentially.

What happens between authentication and ReadyForQuery

Every piece must happen in this exact order. If the pool connection fails (PostgreSQL down, max connections reached, ping fails), gprxy sends ErrorResponse with SQLSTATE 08006 to the client and the connection is torn down cleanly. The client never enters a half-connected state.

Now the proxy is at the query loop entry point, where the user runs queries and the proxy bridges the gap between the user and the running PostgreSQL instance.

Here is the complete deep dive into the entire query loop and cleanup.

The Query Loop Entry Point

After handleStartupMessage returns, control lands here:

logger.Debug("entering query handling loop")

for {

err := pc.handleMessage(pgc)

if err != nil {

logger.Debug("query handling terminated: %v", err)

return

}

}

This is an unconditional for {} — it runs forever until handleMessage returns a non-nil error. There is no break condition, no timeout, no idle check. The goroutine lives as long as the client is connected.

pgc is the *pgproto3.Backend connected to the client socket. It is the same one used during startup. It is passed into every handleMessage call.

Anatomy of One Cycle

Part A: Reading the client message

func (pc *Connection) handleMessage(client *pgproto3.Backend) error {

msg, err := client.Receive()

if err != nil {

return logger.Errorf("client receive error: %w", err)

}

client.Receive() calls into the TCP socket read buffer. The goroutine is parked by the OS here — it is not spinning, not consuming CPU. It wakes only when bytes arrive on the socket.

pgproto3.Backend.Receive() reads the first byte (the message type identifier), then reads the 4-byte length field, then reads exactly length - 4 more bytes to get the full payload, then deserializes everything into a typed Go struct and returns it.

If the client closes the TCP socket (Ctrl+C, process killed, network drop), the OS delivers an EOF to the read call. client.Receive() returns an error wrapping io.EOF, handleMessage returns that error, the outer loop sees non-nil and calls return, which exits handleConnection and triggers the defer.

Part B: Classify and log

key := pc.poolConn.Conn().PgConn().SecretKey()

pid := pc.poolConn.Conn().PgConn().PID()

switch query := msg.(type) {

case *pgproto3.Query:

logger.Info("[%s] query: %s", pc.user, query.String)

logger.Debug("query connection PID=%d, secret_key=%d", pid, key)

case *pgproto3.Parse:

logger.Debug("[%s] parse: statement='%s' query='%s'", pc.user, query.Name, query.Query)

case *pgproto3.Describe:

objectType := "statement"

if query.ObjectType == 'P' {

objectType = "portal"

}

logger.Debug("[%s] describe: %s='%s'", pc.user, objectType, query.Name)

case *pgproto3.Bind:

paramCount := len(query.Parameters)

logger.Debug("[%s] bind: portal='%s' statement='%s' params=%d", pc.user, query.DestinationPortal, query.PreparedStatement, paramCount)

case *pgproto3.Execute:

maxRows := "unlimited"

if query.MaxRows > 0 {

maxRows = fmt.Sprintf("%d", query.MaxRows)

}

logger.Debug("[%s] execute: portal='%s' max_rows=%s", pc.user, query.Portal, maxRows)

case *pgproto3.Sync:

logger.Debug("[%s] sync: transaction boundary", pc.user)

case *pgproto3.Terminate:

logger.Info("[%s] client disconnecting gracefully", pc.user)

return logger.Errorf("client terminated")

default:

logger.Debug("[%s] unknown message type: %T", pc.user, query)

}

The switch is logging only — it does not change the message or alter routing. Every message type has its own log line.

The Terminate case is the only one that exits early before forwarding. It returns an error immediately — the loop will exit. Notice it returns before the pc.bf.Send(msg) call below. The Terminate message is never forwarded to PostgreSQL. PostgreSQL doesn’t need to be told — the pool connection is not being closed, it is going back to the pool. PostgreSQL will only learn the session ended when gprxy runs ROLLBACK + DISCARD ALL later.

The two lines at the top of the switch:

key := pc.poolConn.Conn().PgConn().SecretKey()

pid := pc.poolConn.Conn().PgConn().PID()

These are read on every single message, but they are only used in the Query log line. This is slightly wasteful — two pointer dereferences on every message regardless of type.

Part C: Forward the message to PostgreSQL

err = pc.bf.Send(msg)

if err != nil {

return logger.Errorf("unable to send query to backend: %w", err)

}

pc.bf is the *pgproto3.Frontend wired to the pool connection’s raw TCP socket. Send(msg) takes the Go struct, serializes it back into PostgreSQL wire protocol bytes, and writes them to that socket.

This is a complete passthrough — gprxy does no SQL parsing, no query analysis, no modification of any kind. The bytes that PostgreSQL receives are byte-for-byte identical to what the client sent (re-serialized through pgproto3, but semantically identical).

The two PostgreSQL query protocols work differently here:

Simple Query — one message, one forward:

- Client sends one

Querymessage with raw SQL text handleMessageis called once- One

pc.bf.Send(query)forwards it relayBackendResponsecollects all responses untilReadyForQuery

Extended Query — multiple messages, multiple forwards:

- Client sends

Parse,Bind,Describe,Execute,Sync— each as a separate message handleMessageis called once per message — 5 separate calls for 5 messages- Each call forwards its one message and then calls

relayBackendResponse - Only

Syncproduces aReadyForQueryfrom PostgreSQL — the other messages getParseComplete,BindComplete, etc.

This means for an extended query cycle, relayBackendResponse is called 5 times but returns nil (continues to outer loop) after each intermediate response, and finally returns nil after the ReadyForQuery that follows Sync.

Part D: Check for Terminate again

if _, ok := msg.(*pgproto3.Terminate); ok {

return logger.Errorf("connection terminated")

}

This is actually dead code in practice — the Terminate case in the switch already returned before reaching here. This is a redundant safety net. If somehow execution reaches here with a Terminate message, it exits before calling relayBackendResponse (which would block forever waiting for a backend response that will never come, since Terminate doesn’t produce one).

Part E: Relay all backend responses

return pc.relayBackendResponse(client)

relayBackendResponse: The Response Pump

func (pc *Connection) relayBackendResponse(client *pgproto3.Backend) error {

for {

msg, err := pc.bf.Receive()

if err != nil {

return logger.Errorf("backend receive error: %w", err)

}

err = client.Send(msg)

if err != nil {

return logger.Errorf("client send error: %w", err)

}

switch msgType := msg.(type) {

case *pgproto3.ReadyForQuery:

logger.Debug("query completed, ready for next query (status: %c)", msgType.TxStatus)

return nil

case *pgproto3.ErrorResponse:

logger.Warn("query error: %s (code: %s)", msgType.Message, msgType.Code)

case *pgproto3.CommandComplete:

logger.Debug("command completed: %s", msgType.CommandTag)

}

}

}

This loop does two things and only two things: read from backend, write to client. Every message is forwarded unconditionally before the switch even runs. The switch is for logging and for detecting the exit condition.

pc.bf.Receive() reads from the pool connection’s raw TCP socket — this is the raw PostgreSQL wire protocol coming from the database. Like client.Receive(), this parks the goroutine until bytes arrive.

client.Send(msg) serializes the message and writes it to the client socket.

The ReadyForQuery exit condition

ReadyForQuery is the only message that ends the relay loop. It returns nil, which propagates back to handleMessage returning nil, which causes the outer for loop to call handleMessage again.

ReadyForQuery carries a TxStatus byte:

'I'= idle (not in a transaction)'T'= in an open transaction (inside aBEGIN…COMMITblock)'E'= in a failed transaction (error occurred, needsROLLBACK)

gprxy forwards this status byte unchanged to the client. Client drivers use it to track transaction state.

What ErrorResponse does (and does not do)

Notice ErrorResponse is not a return condition. It is just logged as a warning. The loop continues reading until ReadyForQuery arrives. This is correct — PostgreSQL always sends ReadyForQuery after an error, even if it looks like:

ErrorResponse("relation 'foo' does not exist")

ReadyForQuery(TxStatus='I')

Both are forwarded. Both are received by the client. The error is delivered to the application through the driver’s normal error handling. The connection stays alive.

What CommandComplete does

Also just logged. The tag string tells what happened: "SELECT 5", "INSERT 0 1", "UPDATE 3", "DELETE 0", "BEGIN", "COMMIT", "ROLLBACK". Forwarded to client, loop continues.

Full response stream examples

SELECT * FROM users:

T RowDescription [id:int4, name:text, email:text] → forwarded

D DataRow [1, "Alice", "alice@example.com"] → forwarded

D DataRow [2, "Bob", "bob@example.com"] → forwarded

D DataRow [3, "Carol", "carol@example.com"] → forwarded

C CommandComplete "SELECT 3" → forwarded + logged

Z ReadyForQuery TxStatus='I' → forwarded + loop exits

INSERT INTO users VALUES (...):

C CommandComplete "INSERT 0 1" → forwarded + logged

Z ReadyForQuery TxStatus='I' → forwarded + loop exits

SELECT * FROM nonexistent:

E ErrorResponse code="42P01", message="relation 'nonexistent' does not exist" → forwarded + logged as warn

Z ReadyForQuery TxStatus='I' → forwarded + loop exits

BEGIN followed by INSERT:

[handleMessage called for "BEGIN"]

C CommandComplete "BEGIN" → forwarded

Z ReadyForQuery TxStatus='T' → forwarded + loop exits

[handleMessage called for "INSERT INTO..."]

C CommandComplete "INSERT 0 1" → forwarded

Z ReadyForQuery TxStatus='T' → forwarded + loop exits (still in transaction)

[handleMessage called for "COMMIT"]

C CommandComplete "COMMIT" → forwarded

Z ReadyForQuery TxStatus='I' → forwarded + loop exits (back to idle)

How the Loop Ends: All Exit Paths

handleMessage returns a non-nil error in these cases:

| Cause | Where | Error |

|---|---|---|

Client sends Terminate |

switch case, line 51 | "client terminated" |

| Client TCP socket closed (EOF, crash, network drop) | client.Receive(), line 15 |

wraps io.EOF |

| Failed to write to client | client.Send() in relay, line 78 |

"client send error" |

| Failed to read from backend | pc.bf.Receive() in relay, line 74 |

"backend receive error" |

| Failed to forward to backend | pc.bf.Send(), line 59 |

"unable to send query to backend" |

All of them propagate to the outer loop:

for {

err := pc.handleMessage(pgc)

if err != nil {

logger.Debug("query handling terminated: %v", err)

return // ← exits handleConnection

}

}

return from handleConnection triggers the defer.

The defer Cleanup

The defer was registered at the very start of handleConnection before any work began:

defer func() {

if err := pc.conn.Close(); err != nil {

logger.Error("error closing client connection: %v", err)

}

if pc.poolConn != nil {

err := fullResetBeforeRelease(pc)

if err != nil {

logger.Error("error while releasing connection back to the pool: %v", err)

}

pc.poolConn.Release()

logger.Debug("released connection back to pool")

}

if pc.key != nil && pc.server != nil {

pc.server.unregisterConnection(pc.key.ProcessID, pc.key.SecretKey, pc)

}

logger.Info("connection closed")

}()

Go’s defer runs even if the function panics. The three steps always execute in order.

Cleanup Step 1: Close the client TCP socket

if err := pc.conn.Close(); err != nil {

logger.Error("error closing client connection: %v", err)

}

pc.conn is the net.Conn to the client. Close() sends a TCP FIN to the client and releases the OS file descriptor. If the client already closed the connection (which is why the loop exited), Close() still runs and may return an error like use of closed network connection — that error is logged but does not stop cleanup.

Cleanup Step 2a: fullResetBeforeRelease

func fullResetBeforeRelease(connection *Connection) error {

_, err := connection.poolConn.Exec(context.Background(), "ROLLBACK")

if err != nil {

logger.Debug("unable to rollback: %v", err)

return err

}

_, err = connection.poolConn.Exec(context.Background(), "DISCARD ALL")

if err != nil {

logger.Debug("unable to execute discard all: %v", err)

return err

}

return nil

}

These run through pgx’s normal Exec path — not through pc.bf. pgx handles the wire protocol for these two commands internally.

ROLLBACK:

If the client disconnected mid-transaction (crashed, network drop, or explicitly left a BEGIN open), the PostgreSQL session is still inside that transaction. Any rows it locked are still locked. Any changes are still pending. Without ROLLBACK, those locks would remain held until PostgreSQL’s idle_in_transaction_session_timeout fired (if configured) — potentially blocking other connections for minutes.

ROLLBACK explicitly ends the transaction. If there is no open transaction, ROLLBACK still succeeds — it just does nothing. So it is safe to always run.

DISCARD ALL:

This is a PostgreSQL supercommand that resets all session-level state in a single round trip. It is equivalent to running all of these simultaneously:

SET SESSION AUTHORIZATION DEFAULT; -- reset any SET ROLE/SET SESSION AUTHORIZATION

RESET ALL; -- all GUC parameters to defaults (timezone, search_path, etc.)

DEALLOCATE ALL; -- all named prepared statements

CLOSE ALL; -- all open cursors

UNLISTEN *; -- all LISTEN subscriptions

SELECT pg_advisory_unlock_all(); -- all advisory locks held by this session

DISCARD PLANS; -- all cached query plans

DISCARD SEQUENCES; -- cached nextval state for sequences

After DISCARD ALL, the PostgreSQL session is in a state identical to a brand new connection. The next client to acquire this pool connection gets a completely clean session — no leaked prepared statements, no inherited timezone settings, no open cursors, no stale plans.

Why both are needed even though DISCARD ALL includes rollback behavior:

DISCARD ALL itself will fail if called inside an active transaction — PostgreSQL returns ERROR: DISCARD ALL cannot run inside a transaction block. So ROLLBACK must run first to ensure no active transaction exists, then DISCARD ALL can safely run.

Cleanup Step 2b: poolConn.Release()

pc.poolConn.Release()

This returns the connection to the pgxpool free list. Internally pgxpool:

- Locks puddle’s internal mutex

- Moves the connection resource from the “acquired” set back to the “idle” free list

- Calls

sync.Cond.Signal()to wake any goroutine that is blocked waiting onpool.Acquire() - Unlocks

The connection is now available for the next client’s AcquireConnection call. No new TCP connection to PostgreSQL needs to be opened — the existing socket is reused.

Cleanup Step 3: unregisterConnection

if pc.key != nil && pc.server != nil {

pc.server.unregisterConnection(pc.key.ProcessID, pc.key.SecretKey, pc)

}

Inside unregisterConnection:

func (s *Server) unregisterConnection(processId, secretkey uint32, conn *Connection) {

s.connMutex.Lock()

defer s.connMutex.Unlock()

key := s.makeCancelKey(processId, secretkey)

delete(s.activeConnections, key)

}

Acquires the write lock on server.activeConnections, computes the uint64 key (ProcessID << 32 | SecretKey), deletes that entry from the map, releases the lock.

After this, if any stale cancel request arrives with this connection’s (PID, SecretKey), getConnectionForCancelRequest returns exists=false and the cancel is safely ignored.

The nil checks (pc.key != nil && pc.server != nil) protect against the case where the goroutine exits during or before startup — if authentication failed, pc.key was never set, so there is nothing to unregister.

What Happens to the Goroutine

After the defer completes:

logger.Info("connection closed")

// defer ends, function returns

// goroutine exits

The goroutine is returned to Go’s goroutine scheduler. Its stack memory is reclaimed. The *Connection struct it was holding becomes unreachable (assuming no other goroutine holds a reference) and will be garbage collected.

Back in server.Start(), this goroutine’s wg.Done() is called (via the outer defer wg.Done() in the wrapper goroutine), decrementing the WaitGroup counter. This matters for graceful shutdown — wg.Wait() will unblock only when all active connection goroutines have fully exited and cleaned up.

Full Picture of One Complete Connection Lifetime

This basically covers the full working of the gprxy proxy that I built over the last month or so. There are a couple of other things that haven’t been covered in this very very long blog post.

A couple of other things I didn’t cover

- The CLI layer - gprxy is a full CLI tool: The proxy is not just a server binary. It is a Cobra CLI application with three commands. Everything discussed so far was the

startcommand. There are two more pieces there: the entry point and the server. Feel free to check it out. - User login - the PKCE OAuth flow: This is the human user’s entry point. It performs a full PKCE (Proof Key for Code Exchange) OAuth 2.0 flow entirely from the terminal.

The full flow looks something like this:

- Generate

code_verifier(32 random bytes, base64url-encoded) - Generate

code_challenge = base64url(SHA256(code_verifier)) - Generate

state(24 random bytes) — CSRF protection - Build the authorization URL with all parameters

- Open the browser to that URL

- Start a local HTTP server on

:8085for the callback - User logs in via Auth0 SSO in the browser

- Auth0 redirects to

http://localhost:8085/callback?code=...&state=... - Callback handler verifies

statematches (CSRF check) - Exchange code +

code_verifierfor tokens viaPOST /oauth/token - Parse ID token for name/email

- Parse access token for roles

- Save all tokens to

~/.gprxy/credentials(mode0600)

What it still needs

The biggest missing piece is identity preservation — once authenticated, every query runs as gprxy_admin regardless of which human issued it. PostgreSQL row-level security, audit logs, and current_user all see the service account, not the person. The commented-out SET ROLE block was the right instinct but needs a proper implementation with SET SESSION AUTHORIZATION. Without this, the promise of per-user access control is incomplete.

Beyond that, the connect command is a skeleton — it connects but cannot run queries. The pool is per-client-user rather than global, which limits scalability. TLS certificate verification is disabled on the client side. There are no metrics, no health endpoint, and no query timeout. The credentials file stores tokens in plaintext with only filesystem permissions as protection.

All these are things I’m no longer interested in writing for, since I’ve moved on to more interesting projects for myself.

Final thoughts

gprxy is a PostgreSQL wire protocol proxy that replaces database password authentication with OAuth/OIDC identity. The fundamental problem it solves is this: PostgreSQL was designed for users who have database-level accounts and passwords. Modern engineering teams use SSO, JWT tokens, and identity providers. These two worlds do not speak to each other natively. gprxy bridges them.

If you like reading these kinds of fully detailed and architected blogs, do drop a comment or let me know through my socials. I guess nobody has the time to read through all of this anymore, but hey, I tried, and I would be massively grateful and happy even if a single person is able to learn something out of this.

Thank you, and off to the next thing. I’m working on a custom load balancer that decides where to send requests based on latency and RIF — cya then.